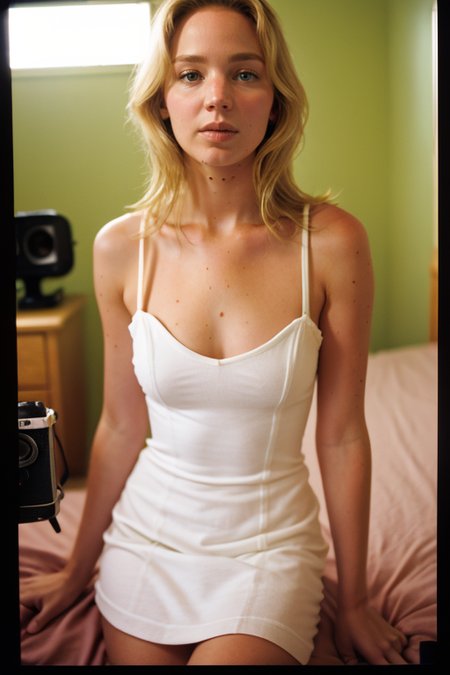

A lora of Jennifer Lawrence trained on images from 2011-2023.

Trigger Word: jennlaw

CFG: 6-30 (with mimic enabled) worked for me fine.

Resolution: Tested from 512x512 to 1024x1024

Checkpoint: Tested on Epicrealism

It looks like the generation makes a few too many moles on her neck. I have yet to find a negative prompt to pull that in a bit.

Description

FAQ

Comments (1)

What is your lora training process? I'm trying to make a lora but they don't turn out as well as yours do. I'm using a dataset of ~75 decent-good quality images, captioned by BLIP, and using regularization images as well. Also, if it matters, I crop the images to 512*512 before using them to train. It seems as if my Lora's are overtrained because I get a lot of artifacts when I put the lora strength at 1. I'm wondering if I should decrease my dataset and improve captioning? Or perhaps tune the network rank/alpha/learning rates? Should I use buckets? Do you have any advice?