Controlnet QR Code Monster For SD-1.5

Model Description

This model is made to generate creative QR codes that still scan. Keep in mind that not all generated codes might be readable, but you can try different parameters and prompts to get the desired results.

More info at https://huggingface.co/monster-labs/control_v1p_sd15_qrcode_monster

Description

Complete retraining of the model on more and better data

Gray now gives more freedom to that portion of the image

Comments (64)

What are the system requirements for this?

Don't really know, same as other Controlnets, so I guess a bit more than normal SD-1.5

Neat. Something I noticed: Google Lens will do an image search on these, while dedicated QR apps read the HF link just fine.

Yeah, basically the problem is that general-purpose object detection apps first run an object detection model that looks for something vaguely resembling a QR code, and only try to read it if they find one. This doesn't look like a QR code, so they never try to scan it.

Normal QR code reader apps will just start scanning directly so no problem.

@achiru I get that. Just figured it was worth pointing out, since my default answer to people who say "I don't have a QR scanner" is to tell them that their phone's built-in camera app can do it.

What do you mean by module size of 16px? And what about the gray background you mention? The background of the QR code should be gray? I think seeing the exact settings you used for the examples would help many to find a good starting point.

The "pixels" of a QR code are called modules. My model is optimized for QR codes with a module size of 16px.

You put your code into a bigger image (let's say 512x512 or 768x768). That image should be solid gray before you put the code on top.
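To make the layout concrete, here is a minimal sketch of that idea using only the Python standard library: each module is drawn as a 16px square and the code is centered on a solid gray canvas. The function names, the mid-gray value of 128, and the toy 2x2 pattern are assumptions for illustration only; a real module matrix would come from a QR generator.

```python
GRAY = 128            # assumed mid-gray; the thread only says "solid gray"
BLACK, WHITE = 0, 255


def build_control_image(modules, canvas_size=768, module_px=16):
    """Return a canvas_size x canvas_size grid of 8-bit gray values.

    `modules` is a square list of lists; True means a dark module.
    """
    code_px = len(modules) * module_px
    if code_px > canvas_size:
        raise ValueError("QR code does not fit on the canvas at this module size")
    offset = (canvas_size - code_px) // 2
    # Start from solid gray, then paint the code on top of it.
    canvas = [[GRAY] * canvas_size for _ in range(canvas_size)]
    for y in range(code_px):
        for x in range(code_px):
            dark = modules[y // module_px][x // module_px]
            canvas[offset + y][offset + x] = BLACK if dark else WHITE
    return canvas


def save_pgm(canvas, path):
    """Write the grid as a binary PGM file, which most image tools can open."""
    height, width = len(canvas), len(canvas[0])
    with open(path, "wb") as f:
        f.write(f"P5\n{width} {height}\n255\n".encode("ascii"))
        for row in canvas:
            f.write(bytes(row))


# Toy pattern, not a valid QR code: it only demonstrates the layout.
toy = [[True, False], [False, True]]
image = build_control_image(toy, canvas_size=64, module_px=16)
```

The resulting image is what goes into the ControlNet input; everything left gray is where the model is free to draw.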

@achiru I haven't seen a QR code generator that lets the user choose 16px for the module size. The closest I found is https://keremerkan.net/qr-code-and-2d-code-generator/ which has 15.

So this shouldn't be used with txt2img, but img2img with a blank gray image as the starting point? It doesn't seem possible to start with a solid gray image in txt2img.

@theunlikely you can use this for example:

https://gchq.github.io/CyberChef/#recipe=Generate_QR_Code('PNG',16,0,'High')&input=Y29udGVudF9oZXJl

then just paste it onto a gray image of your choice

This is the input for the controlnet model. Nothing to do with img2img

@achiru So like this? https://i.imgur.com/M3gRXE0.png

@achiru Thanks for the link to the generator that allows customizing module size. The QR codes it makes aren't transparent though, so the gray background would be completely covered right?

Hi, great work! I would also be interested in understanding the bit with the "gray" image in the background. Is it pasting the qr code on an image with gray background ? Why exactly is that ? Thank you.

@achiru Hmm, I'm unable to get this to work... In the ControlNet panel, what Control Type should I choose? All, Canny, Depth, etc

@poyuuu I just posted instructions with images here: https://civitai.com/posts/422330

@theunlikely I just posted an example here https://civitai.com/posts/422330

@mouseyresidence327 example here: https://civitai.com/posts/422330

As to why, well that's because I trained the model to recognize gray as a no-constraints zone. So it will do whatever looks the best where you input gray.

@achiru Thanks a bunch!!

I really like this controlnet and I have gotten it to work.

But I am trying to understand how you are creating images that the qr code is so artistically incorporated.

Mine seem to have the QR code as more of an after thought.

I am trying to reproduce a couple of the images you include in this page and I am not getting near what you got.

You mention that you are using realisticvision_v40,

but when I click on the image and look at the info it is showing realisticvision_v20, I have tried both of these with the data from your images and I still don't get close to your image.

Would you please give me the specifics that you actually use to generate this.

Everything should be included in the images metadata, including the controlnet settings. A good starting point is the settings I show in this post https://civitai.com/posts/422330 .

You should play with the start and weight settings to get what you want. But hard to say without knowing what you're using now.

Also make sure your controlnet image input is of the same size as the image you're generating. If not, pad the code with gray to get the right size (you can try my input in the post linked above, for 768x768 images).

@achiru I tried to get the image metadata and I am not seeing it. I am more used to getting the settings from .png, but I looked at the exif info on the .jpg and didn't see anything.

I am currently using 1.2 for the controlnet weight, .3 for start and .95 for end.

I am using realisticVision_V40 with the included VAE.

I have gone as high as 1.6 for the weight and as low as .15 on the start.

I am getting better images now. I guess it depends on the prompt. Some don't seem to incorporate the QR code as well.

I have been using a 700x700 qrcode for a 768x768 image, I looked at your qrcode and thought that was what you did. Also I started using the QRcode generator that you recommended.

@mishafarms I didn't know civitai didn't provide the original png, in that case you can't access my OG metadata.

But you should have exactly the same size for your images, 700 and 768 won't do.
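The size rule can be worked out with a little arithmetic. The sketch below assumes the standard QR relation of 17 + 4 x version modules per side (quiet zone not included) and the 16px module size mentioned earlier; the function names are hypothetical. It computes how much gray padding goes on each side instead of resizing the code, since resizing would change the module size.

```python
def qr_side_modules(version: int) -> int:
    """Modules per side for a QR code of the given version (1 to 40)."""
    if not 1 <= version <= 40:
        raise ValueError("QR versions run from 1 to 40")
    return 17 + 4 * version


def gray_padding(code_px: int, width: int, height: int):
    """Return (left, top, right, bottom) gray padding that centers the code."""
    if code_px > width or code_px > height:
        raise ValueError("code is larger than the target image")
    pad_x = width - code_px
    pad_y = height - code_px
    left, top = pad_x // 2, pad_y // 2
    return left, top, pad_x - left, pad_y - top


MODULE_PX = 16
for version in (1, 2, 7):
    side_px = qr_side_modules(version) * MODULE_PX
    print(version, side_px, gray_padding(side_px, 768, 768))
```

Under these assumptions a version 7 code (45 modules) is 720px wide and still fits a 768x768 image, while a version 8 code (49 modules, 784px) would not.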

Does this work on Easy Diffusion? I looked up how to use the control net, and I don't think I understand the instructions, possibly because the options aren't available in Easy Diffusion beta yet. I'm using this as a control net without a filter, and I'm not getting results that are anything like a QR code.

I don't see controlnet options listed in the official Easy Diffusion instructions, where did you find that?

@achiru I'm using Beta options, so the documentation I can find is here: https://github.com/easydiffusion/easydiffusion/wiki/ControlNet

It is supposed to work with just the controlnet and no filters (and of course the input image), if it doesn't do anything it's probably a problem with your settings or with easydiffusion

You'll need the control_v1p_sd15_qrcode_monster.yaml file from here: https://huggingface.co/monster-labs/control_v1p_sd15_qrcode_monster/tree/main . Place it next to the controlnet file and give it the same name (same base name, different extension). For example, qr.safetensors and qr.yaml will work just fine.
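If you'd rather script the renaming, something like this should do it. It is only a sketch: the helper name is made up, and the demo runs in a temporary folder with placeholder files rather than a real models directory.

```python
import shutil
import tempfile
from pathlib import Path


def pair_yaml_with_model(model_file: Path, yaml_source: Path) -> Path:
    """Copy the yaml next to the model, reusing the model's base name."""
    target = model_file.with_suffix(".yaml")
    if yaml_source.resolve() != target.resolve():
        shutil.copyfile(yaml_source, target)
    return target


# Demo with empty placeholder files in a throwaway folder.
with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    model = folder / "qr.safetensors"
    model.write_bytes(b"")
    source = folder / "control_v1p_sd15_qrcode_monster.yaml"
    source.write_text("placeholder: true\n")
    print(pair_yaml_with_model(model, source).name)  # qr.yaml
```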

Then just set it to Euler A, 50 steps, 7 guidance, no negative prompts (at least in my experience) and it should work just fine. Here's one I made: https://ibb.co/nCSWJNT hit me up on Discord for help

@_o_w_l thank you

M1 Max with 64 GB RAM here. I get this:

User specified an unsupported autocast device_type 'mps'

I can use realistic vision 5.1 without any issue but when I add qrCode monster, impossible. I am using Comfyui. Thanks

I don't think this is a problem with my model, you'll probably have the same issues with any other controlnet

That's because autocast isn't supported on Apple's backend, amazingly enough.

As for fixing it, you usually need to change the attention model you're using when you start comfy... usually.

I think Quadratic (the default) was the only one that worked for me when I had this happen on the DirectML... until I added a hypernetwork too and that one called autocast from another attention function and I finally deleted all the autocast blocks.

@GnomeExplorer I don't think I would know how to do that myself but happy to watch any tutorial if you have one, thanks

@kameleo333 It should be fixed now anyway but if it crops up again, some command line options you can try when you run main.py since autocast is normally used to upcast fp16 -> fp32 during attention and CLIP :

You can try the 3 different attention models one at a time and see if one of them gets through. The difficult part is that some custom nodes added their own attention types that won't respect the datatype flags or don't upcast but you should be fine with just the controlnet.

--use-split-cross-attention

--use-quad-cross-attention

--use-pytorch-cross-attention

If that doesn't work, try

--fp32-vae --force-fp32 (mac should run in fp32 normally anyway the way comfy configures it... if you don't specify , but it might be using fp16 for something)

the above should have done it but who knows, again

or

--dont-upcast-attention prevents the attention models from using autocast (I think) which would eliminate this the next time a new attention type shows up. I've seen quite a few in the past 3 months, it's evolving fast.

If those don't work file a bug on the git :-)

Are you planning to update for SDXL since Controlnet is merged for controlnet

I hope so as well

Working on it but I still have issues with the result

@achiru Awesome work mate! Can't wait for SDXL version! You are a legend!

How can I run this? :)

Here is a tutorial,

Just use the latest version.

Now it's V2 =)

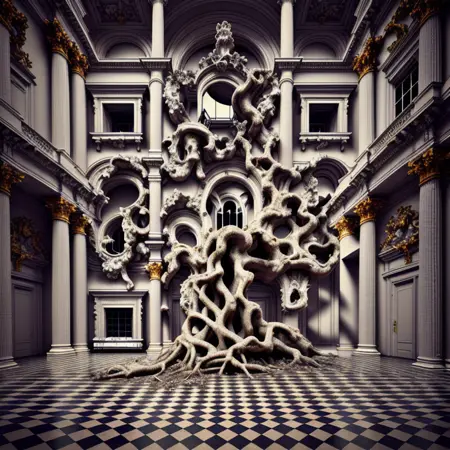

Can you please upload this image here with your prompts? That would be great. Thank you.

https://huggingface.co/monster-labs/control_v1p_sd15_qrcode_monster/blob/main/images/tree.png

I don't think I still have the parameters for this one, but it was about the same as the ones on this page, with the code placed at the bottom of a gray image of size 768x1024

For the 3 landmarks, I had a specific workflow, I'll see if I can still find it

How would I incorporate this into a ComfyUI setup where I'm already running SD?

I had some success implementing it as a ControlNet node reading the model from the checkpoint and passing the control_net to an Apply ControlNet (adv) node that is also receiving the image of the QR code from a Load Image node. From that, you combine the Apply ControlNet node with an empty latent image into a KSampler and voilà. You will need two CLIP text encodes between your load checkpoint and the Apply ControlNet node, of course, for prompts. I hope it works for you as well

This is a great model! Thank you guys for all your work. Looking forward to SDXL models too.

I really like this model but I have a hard time making the generated images scannable. Can you provide some config values to get better results?

I've been testing a lot, but only images that look quite like QR codes are scannable. I want to get some scannable art :)

You have to go for simple styles. Anything too much and most QR scan apps won't be able to take it. 99% of the created images you see here are unusable for scanning but they do look pretty

Hopefully this helps someone else. QR Code Helper.

Thanks - what does this file do?

Any possible way to do this for sdxl ?

Working on it

There is no workflow in any of these images. And what do I do with the yaml file? Maybe it's for A1111?

These were made using automatic's ui, but I have a comfyui compatible version here https://huggingface.co/monster-labs/control_v1p_sd15_qrcode_monster/blob/main/v2/diffusion_pytorch_model.safetensors

You can just use a normal sd1.5 controlnet workflow.

Anyone know why it is flipping my "Image" from black to white so the hidden image is white?

This makes it hard to read.

You can also make cool WiFi QR Codes with this

WIFI:S:HEREGOESTHEWIFINAME;T:WPA;P:PASSWORDOFWIFI;;

Just replace the two placeholders (HEREGOESTHEWIFINAME and PASSWORDOFWIFI) with your WiFi name and password, then create a QR code from the resulting text.
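A small helper can build that string for you. This is a sketch, not something from the thread: the function names are made up, and the backslash-escaping of `\`, `;`, `,`, `:` and `"` follows the convention commonly documented for this WiFi format, which I'm assuming your scanner also follows.

```python
def escape_wifi_field(value: str) -> str:
    """Backslash-escape characters that have a meaning in the WIFI: format."""
    special = '\\;,:"'
    return "".join("\\" + ch if ch in special else ch for ch in value)


def wifi_payload(ssid: str, password: str, auth: str = "WPA") -> str:
    """Build the text to encode in the QR code."""
    return (
        f"WIFI:S:{escape_wifi_field(ssid)};"
        f"T:{auth};"
        f"P:{escape_wifi_field(password)};;"
    )


print(wifi_payload("HEREGOESTHEWIFINAME", "PASSWORDOFWIFI"))
# WIFI:S:HEREGOESTHEWIFINAME;T:WPA;P:PASSWORDOFWIFI;;
```

Feed the printed text into the QR generator of your choice, then paste the code onto the gray image as usual.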

i need this too

Use quik QR art

It's a shame there's no standard for hashing of the password in WiFi QRs (or at least I couldn't find one when I was generating a bunch last year).

The codes for WiFi usually end up fairly long because of the text length and the error correction. To reliably get working codes that didn't overwhelm the generated image, my strategy was to set up one controlnet at a strength < 1 over a range of about 75% of the steps for the original image. The output from this can sometimes scan, but most of the time, if you've got a complicated prompt or a bunch of LoRAs, some of the important areas will change into objects too light to provide contrast.

Then upscale it with a NN latent upscaler to avoid a VAE messing with contrast and run a second QR monster controlnet on it for about 50% of the second ksampler @ strength of 1.05-1.15 with a noise reduction level of something around ~33-55% depending on the CFG and lora chain. Running it for 50% reestablishes any dark areas that got obliterated by the character lora and prompt during the low res generation just enough that the remaining steps will keep them around but fade them a bit or transform them into other objects and you'll get something that scans about 90% of the time but isn't immediately obvious as a QR.

It's a little slower this way from running two controlnets but otherwise you end up with too many generations that need to be manually fixed after upscale and the QR tends to get really obvious if you resort to that.

Hi, well done!

Can you try to make Stereogram with qrmonster?

now make the qr code animated and scannable :D

could you please make this for Flux? kthxbai

doesn't work at all, completely useless. You'll get better results with basic controlnets.

Details

Files

qrCodeMonster_v20.safetensors

Mirrors

qrCodeMonster_v20.safetensors

control_v1p_sd15_qrcode_monster.safetensors

control_v1p_sd15_qrcode_monster_v2.safetensors

controlnetQRCode_sd15V1.safetensors

v2_control_v1p_sd15_qrcode_monster_v2.safetensors
