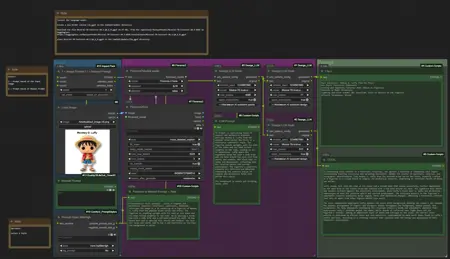

ComfyUI Prompt Flux/SD3 Generator

This workflow offers a completely self-contained solution for generating and optimizing prompts for both CLIP-L and T5XXL, running entirely within ComfyUI without requiring any external APIs or Ollama.

Key Features

Dual Input Methods: Process images using Florence-2 Vision Language Model or input custom prompts manually

Fully Local Processing: All language models run directly within ComfyUI

Zero External Dependencies: No need for API keys or Ollama setup

Technical Architecture

The workflow utilizes three locally running Mistral-7B-Instruct models in sequence to:

Generate detailed descriptions

Optimize prompt structure

Format specialized outputs for CLIP-L and T5XXL
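The three-stage chain above can be sketched in plain Python. This is an illustration only, not the workflow's actual node graph: `run_pipeline`, the `llm` callable, the stage instructions, and the `---` separator are all hypothetical, since the description does not show the real prompts used for each Mistral stage.

```python
from typing import Callable, Dict

# Hypothetical stage instructions; the workflow's real prompts are not
# given in this description.
STAGE_INSTRUCTIONS = (
    "Describe the scene in detail:",
    "Restructure this description as an image prompt:",
    "Split this prompt into CLIP-L tags and a T5XXL paragraph, separated by '---':",
)


def run_pipeline(source_text: str, llm: Callable[[str], str]) -> Dict[str, str]:
    """Pass text through three LLM calls in sequence.

    `llm` is any function that maps a prompt string to a completion
    string (assumed here; in the workflow this role is played by the
    Searge-LLM nodes running Mistral-7B).
    """
    # Stage 1: expand the caption or manual prompt into a description.
    description = llm(f"{STAGE_INSTRUCTIONS[0]}\n{source_text}")
    # Stage 2: optimize the structure of that description.
    optimized = llm(f"{STAGE_INSTRUCTIONS[1]}\n{description}")
    # Stage 3: produce the two encoder-specific outputs.
    formatted = llm(f"{STAGE_INSTRUCTIONS[2]}\n{optimized}")
    clip_l, _, t5xxl = formatted.partition("---")
    return {"clip_l": clip_l.strip(), "t5xxl": t5xxl.strip()}
```

Each stage receives only the previous stage's output, which mirrors the "in sequence" wording above. Any local model wrapper with a string-in, string-out interface could be passed as `llm`.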

Components

Florence2 custom nodes for image analysis

Searge-LLM custom nodes for running Mistral-7B locally

Custom prompt styling and optimization scripts

Integrated CLIP-L and T5XXL output formatters

Searge-LLM Installation Guide

Create directory:

mkdir ComfyUI/models/llm_gguf

File: Mistral-7B-Instruct-v0.3.Q4_K_M.gguf (4.37 GB)

Place in: ComfyUI/models/llm_gguf/
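As a quick check of the steps above, a short script can create the directory and confirm that the model file is in place. This is a minimal sketch; the helper names are made up for illustration, and `comfy_root` is assumed to be the path to your ComfyUI folder.

```python
from pathlib import Path

# File name as listed in the installation guide.
MODEL_FILE = "Mistral-7B-Instruct-v0.3.Q4_K_M.gguf"


def prepare_model_dir(comfy_root: str) -> Path:
    """Create <comfy_root>/models/llm_gguf if it does not exist yet."""
    model_dir = Path(comfy_root) / "models" / "llm_gguf"
    model_dir.mkdir(parents=True, exist_ok=True)
    return model_dir


def model_is_installed(comfy_root: str) -> bool:
    """Return True if the GGUF file is present in the expected folder."""
    return (prepare_model_dir(comfy_root) / MODEL_FILE).is_file()


if __name__ == "__main__":
    # Assumes the script is run from the folder that contains ComfyUI/.
    print("Model found:", model_is_installed("ComfyUI"))
```

The script only checks that the file exists under the expected name. It does not download or validate the model.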


Comments (8)

Amazing, almost as good as mine 😜😅. Does running it locally mean Mistral is uncensored, or do you run into that censorship thing as well?

The Florence-2 vision model is censored.

However, Mistral is uncensored. If you set the switch to "2," you can also create uncensored prompts using the manual prompt option.

@denrakeiw Hmm, I can run Mistral 7B 0.3 on my Ollama as well. I'm going to try that next.

Hi, the workflow tells me that it can't find "SDXLPromptStylerbyMileHigh". Can you give me the link to download the node? Thanks

@denrakeiw Thank you!

Is it possible to use this workflow to generate prompts for other models, such as Qwen Edit?