Disclaimer

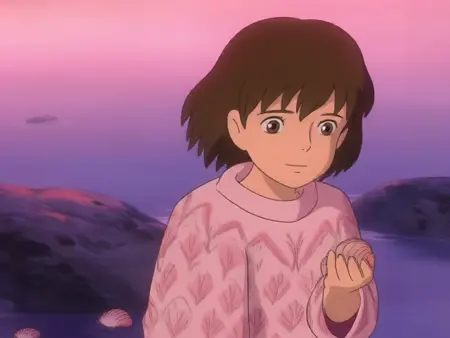

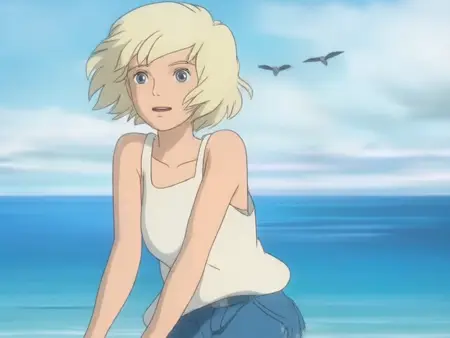

Although base HunyuanVideo knows generic anime style well without any LoRAs and has some knowledge of Studio Ghibli's art style, that knowledge is inconsistent and very prompt-dependent, and the output can sometimes fall back to a realistic style. The shading, palette and linework can also be quite different. So with this LoRA I wanted to try to reinforce the Ghibli art style for HunyuanVideo.

This is the third version of the LoRA. The first two versions were not successful, so I did not publish them.

upd. 01/08/2025 Unfortunately, I have no spare time to work on older models, so retraining this one is no longer planned.

upd. 14/03/2025 After testing Wan2.1-14B-T2V for a week, I must acknowledge that it is superior to HV. Therefore, I will shift to Wan training and do not plan to release any more HV models. However, I'll do my best to release an update to the Ghibli LoRA (once I'm done with some of my other planned Flux/Wan models), because I still feel obligated to finalize it with proper training using videos, not just images.

upd. 02/03/2025 I got carried away with Lumina-2 and Wan-2.1 and got back to Flux training again, so v0.7 will be slightly postponed. But I will definitely release it (probably along with one more anime LoRA).

upd. 08/02/2025 v0.6 was a disappointment. I made several risky decisions that did not justify themselves and weren’t worth the 84 hours of training on an RTX 3090. Stay tuned for v0.7! 🙂

upd. 05/01/2025 Done training v0.4 with musubi-tuner, but it was worse than v0.3, so I won't publish it (and will use diffusion-pipe for v0.5).

upd. 21/01/2025 I made too many mistakes while training v0.5, so I decided to drop it and start from scratch with an upgraded dataset and training parameters (and try musubi one more time). 32 hours wasted, but it's for the best :)

Usage

For inference I use the default ComfyUI pipeline with just an additional LoRA loader node. Kijai's wrapper should work too (at least it worked a week ago, but after that I switched to the native workflow). All parameters are default except:

guidance: 7.0

steps: 30

That does not mean they are optimal. These are just the values I mostly used to generate clips, and other combinations might deliver better results.

The prompt template I am currently using is like this:

A scene from a Studio Ghibli animated film, featuring [CHARACTER DESCRIPTION], as they [ACTION] at [ENVIRONMENT], under [LIGHTING], with [ADDITIONAL SETTING DETAILS], while the camera [CAMERA WORK], emphasizing [MOOD AND AMBIANCE].

I usually feed a set of tags to an LLM, like "blonde woman, barefeet, ocean seashore, fine weather, etc.", and ask it to output a cohesive prompt in natural language according to this template.
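The template can also be filled programmatically, for example to check that no placeholder was left empty before the text goes to an LLM or to the sampler. This is only a minimal Python sketch: the helper name `build_prompt`, the field names and the example values are hypothetical and not part of any tool mentioned here.

```python
# Hypothetical helper: fills the prompt template from this section.
# Field names and example values are illustrative assumptions.
TEMPLATE = (
    "A scene from a Studio Ghibli animated film, featuring {character}, "
    "as they {action} at {environment}, under {lighting}, "
    "with {details}, while the camera {camera}, "
    "emphasizing {mood}."
)

FIELDS = ("character", "action", "environment", "lighting", "details", "camera", "mood")


def build_prompt(**fields: str) -> str:
    """Return the filled template; raise ValueError if a field is missing or blank."""
    missing = [name for name in FIELDS if not fields.get(name, "").strip()]
    if missing:
        raise ValueError(f"missing template fields: {', '.join(missing)}")
    return TEMPLATE.format(**{name: fields[name].strip() for name in FIELDS})


prompt = build_prompt(
    character="a blonde barefoot woman",
    action="walk",
    environment="an ocean seashore",
    lighting="soft midday sunlight",
    details="gentle waves and a clear sky",
    camera="slowly tracks alongside her",
    mood="a calm, carefree atmosphere",
)
print(prompt)
```

The output of such a helper is usually stiffer than what an LLM produces from tags, so it is probably more useful as a baseline or a validity check than as a replacement for the LLM step.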

Training

Please keep in mind that my training routine is not optimal. I am just testing and experimenting, so it's possible it worked not because it is good, but despite being bad.

The current version of the LoRA was trained on 185 fragments (512x512) of screencaps from various Ghibli movies. They were captioned with CogVLM2. The captioning prompt was:

Create a very detailed description of this image as if it was a frame from Studio Ghibli movie. The description should necessarily 1) describe the main content of the scene, detail the scene's content, which notably includes scene transitions and camera movements that are integrated with the visual content, such as camera follows some subject 2) describe the environment in which the subject is situated 3) identify the type of video shot that highlights or emphasizes specific visual content, such as aerial shot, close-up shot, medium shot, or long shot 4) include description of the atmosphere of the video, such as cozy, tense, or mysterious. Do not use numbered lists or line breaks. IMPORTANT: output description MUST ALWAYS start with unaltered phrase 'A scene from Studio Ghibli animated film, featuring...', and then insert your detailed description.

For training I used diffusion-pipe. Other possible choices are finetrainers (it currently requires > 24GB VRAM to train HV), musubi-tuner (I have not yet managed to get good results with it, although that's not the software's fault) and OneTrainer (which I have never tried).

Training was done on Windows 11 Home (WSL2) with 64 GB RAM, on a single RTX 3090. Training parameters were default (main, dataset), except:

rank = 16

lr = 6e-5

I saved a checkpoint every epoch and trained for 20 epochs of 462 steps each, so 9240 steps in total. The speed on the RTX 3090 was approximately 7 s/it (so each epoch took slightly less than 1 hour to train). After testing epochs 13 to 20, I chose epoch 19, as it was the most consistent and gave the fewest errors.
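As a quick sanity check of the figures above, the step count and approximate training time can be recomputed in a few lines of Python. The inputs are taken from the text; the 7 s/it speed is an approximation, so the resulting times are rough estimates.

```python
# Figures taken from the text above; the 7 s/it speed is approximate.
epochs = 20
steps_per_epoch = 462
seconds_per_step = 7.0

total_steps = epochs * steps_per_epoch
minutes_per_epoch = steps_per_epoch * seconds_per_step / 60
total_hours = total_steps * seconds_per_step / 3600

print(f"total steps: {total_steps}")                  # 9240
print(f"minutes per epoch: {minutes_per_epoch:.1f}")  # 53.9
print(f"total training time: {total_hours:.1f} h")    # 18.0
```

So the whole 20-epoch run corresponds to roughly 18 hours of pure training time on the RTX 3090, not counting checkpoint saving or evaluation.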

The result is still far from perfect, but I hope to deliver upgraded versions. The next version will probably be trained on clips instead of images, but I need time to prepare the dataset.

It is also quite possible that the upcoming I2V model will render style LoRAs useless.

P.S.

I'm still amazed we got such an outstanding local video model. I feel like now it's really a Stable Diffusion moment for local video generation. No doubt we will get more models in the future that will surpass it, but HunyuanVideo will always be the first of its kind, at least for me ❤️

Comments (26)

Looks Amazing!

How About Murakami Teruaki AKA (Gold Bear)

His style is interesting 😳, but I currently have no plans for this, sorry.

Wonderful description and lora. Thanks.

😍😍😍

Nice work!

Nice looking Lora and you posted a ton of examples with prompts. Megachad status!

and it takes half of the space of a normal hunyuan LORA!

Yes, it's a rank 16 LoRA, while the default value in diffusion-pipe config is 32.

I’m not entirely sure if this was the correct decision, as I’m still experimenting with different settings. For the next version of LoRA, which will be trained on videos, I might select a higher value.

@seruva19 Do it overnight, twice with the same dataset, one at 32, one at 8; compare results. I notice you only have a 5mb dataset, I'm no expert in training video loras cuz I don't have a million dollar PC, and refuse to support corporate services(when I can help it). But, I train SDXL/Pony/Illustrious loras using 65ish MB in images(85-250ish images), and at 16, that produces fine results, but it's a lot more data, and/or higher quality data. Unless video differs greatly in training, with only 5mb, rank8 should do Ok.. assuming the concept isn't rendered exponential by the addition of linear movement.

@Lazman Thanks for your insights. I agree with you, the dataset is a weak point of this LoRA. It was my first attempt at training a video model, and it could be much better with a more diverse dataset. When I have some spare time, I'll retrain it using videos. I've gained some experience since creating this LoRA, and now I think I know how it should be done. Although, it seems HV is pretty much done at this point.

@seruva19 I've been working with AI on my own for several months now, and just started into video in the past couple days, though I did try LTX a week or two ago, and wasn't really impressed with that, especially in the IMG2VID. It produced results that looked like a bad 90's shockwave flash animation drawn by a child.

That said, much better results with this one in IMG2VID(though I still gotta figure out if you can actually do action in IMG2VID, or just pan/zoom in/out of a.. wobbly character, lol). If I just wanted to generate the typical stuff, porn, especially having to do with women, or sexualized women in general, I'm sure txt2vid would work great, especially on hunyuan with all the loras. But for anything unique, you kinda require IMG2VID, or a 5000$++ PC(3000++ just for a video card with over 16gb vram..) to train video loras. But if you know how to train em on 16, I'd love to hear it. I haven't really looked into it yet due to being so new to this branch of AI.

That said, just today you're now the second person that's implied that there's far better video AI on the horizon. What am I missing? What's new? I wanna know about it. You mention something about video with stable diffusion in your description on this (I had a brisk skim before typing this). I'd love it if there was a good workflow for SDXL to do videos via consecutive image generation.

I'm sure it could even be done by linking existing components, like, vision model, clip model/s, and frame interpolation(for mapping out logical movements/motion within the scene). Though, I'd really like if they could use illustrious instead of the base sdxl model/s.

Cuz I've been using illustrious lately, and realizing, it's really damn good.. Like, to the extent that I question if it could outdo flux. It has the one thing that SDXL lost during the major pruning that happened between 0.9 and 1.0, it has the ability to generate lifelike realism in images, but without the poor anatomy that 0.9 had(still struggles with hands tho :-( .). Like, real/proper shading, not this hyperrealism, studio refined images put out by SDXL/pony models. Although, you need the right model. A lot of the illustrious models only do anime/cartoon, and some of the realism models are meh..

I use one in particular, that unfortunately was removed from this site, called 'Thrillustrious', and it's realism is unmatched so far in SDXL-based models(that I've seen, apart from *luck* images that are like, 1/1000, or perfect blends of loras/prompts/seeds).

@Lazman LTXV is indeed a meme machine, although some folks do cool stuff with it, but it doesn’t match up to HV and Wan.

Nowadays, WanVideo is the new shiny thing everyone's obsessed with, it delivers better motion and isn't guidance-distilled like HV, so it trains better. However, it's not as good in realistic NSFW as Hunyuan (yet).

It's hard to say what the next video models will be. The most foreseeable new releases until the end of the first half of 2025 are probably Goku, Kandinsky 4, and maybe a new model from Black Forest Labs. But who knows, a new challenger could suddenly arise, just like WanVideo did.

Reusing existing models' components for video seems challenging because the current generation of video models is powered by things like causal 3D VAEs and multimodal diffusion transformers, which provide better temporal coherence. As a result, reusing modules from SDXL-based models is most likely to result in something not better than AnimateDiff, which is also outdated for now.

If you want to stay on the cutting edge, I recommend subscribing to the Banodoco Discord, it's the discussion hub for the most trending video models.

About high memory requirements for video models - this is everyone's pain point right now, and it’s not going to change soon, at least not until scale-wise autoregressive video models are implemented. Personally, I expect that by the end of this year.

Training HV on 16 GB is absolutely possible - check out these guides, for example: https://civitai.com/articles/12954/hunyuan-video-lora-training, https://civitai.com/articles/9798/training-a-lora-for-hunyuan-video-on-windows and there are a lot more on Civitai, just search articles for keywords "hunyuanvideo training".

Basically, you can train on images with 16Gb without hassle. To train on videos, you need to stick to low-res short videos (like <=256p, <=2-3s), but modern video models are really good at learning small details and motion even from low-resolution and short videos because of VAE efficiency and the fact they were pre-trained on millions of low-res videos. You can also use block swapping technique (supported by major trainer toolkits like musubi-tuner and diffusion-pipe), which can drastically reduce memory requirements for training (at the cost of duration).

I have to say, even though I don't use SDXL-based models (after Flux arrived, I decided to stick with it and haven't used SDXL derivatives since), Illustrious still amazes me sometimes - what people manage to create with it is impressive, the crispness of the images it produces can be close to Flux in quality, even though it uses the old SDXL 4-channel VAE (if I'm not mistaken). But I've never been personally interested in realism; I mostly focus on anime/illustration stuff.

Btw I think THRILLustrious is available on SeaArt AI now: https://www.seaart.ai/ru/models/detail/9e75470f77c1cd24a717fbfde98cb975

@seruva19 It's funny, apparently I got it backwards. I had it in my mind that wan was the older one and Hunyuan was the big new thing.

"it's not as good in realistic NSFW as Hunyuan"

OK, guess that explains it, people will flock to the porn generators first.

"reusing modules from SDXL-based models is most likely to result in something not better than AnimateDiff,"

Was animatediff bad? I never did try it.. Was it possible to get it good via better trained loras, or was it fundamentally bad in video gen, like, disappearing limbs and such(which I've even seen from HYV).

"If you want to stay on the cutting edge, I recommend subscribing to the Banodoco Discord, it's the discussion hub for the most trending video models."

TBH, I've got discord, but mostly only cuz I have one friend that uses it. As deceptive as my elongated text communications can be on sites like this, I mostly avoid any form of live communication like the plague, these days. Far too much reasoning behind that to go into detail on this, but suffice to say, my experience with people (in general) has been less than desirable.

"this is everyone's pain point right now, and it’s not going to change soon, at least not until scale-wise autoregressive video models are implemented."

Really have no idea what that is, but anything that reduces Vram req, is a good thing. The unspoken alternate would be if Nvidia, one of the top wealthiest corps on the planet, stopped being cheap-fucks, and released video cards over 16gb vram, for less than 3k.. I mean, significantly more.. I'm just now picturing them releasing the 6000 series cards with 18gb vram.. SMH..

"<=256p"

I think I actually feel pain just looking at that number.. I'm one of those people that feels 4k+ shoulda been a universal mainstream by now for all video.. I mean, at that res, you're basically looking at clumps of pixels, rather than a concept, lol.

"check out this guides, for example:"

I may look up something on youtube if I see enough to notice that it's worth looking into training on my hardware, but the one you linked, instant massive oversized tits /barf.. Look, I've got nothing against women, I am not a misogynist, but as someone that isn't into seeing women that way (as in, I'm sternly against the rampant objectification of women), I pretty much got tit PTSD within 2 months of learning image generation..

From a psychological angle, it's like the dog with the bell thing, like, everytime the guy dings the bell, he smacks the dog over the head with it, then the dog bites off his hand and gains a thirst for human blood, and they gotta put the dog down, then the guy with a missing hand has to pay for the dog's funeral, and .. where TF was I going with this..? Anyways, point is, no boob's for me, thx!

Also, this site has been terrible recently.. I've had to refresh it multiple times just to get it to load the pages/thumbnails properly, like, every time.. (let me know if this is somehow just a 'me' thing, but this is the only site it happens on, the rest work fine).

As for the second one, Windows, yuckie! Lol.. I did use windows for most my life, but I got into Linux for Easydiffusion/A1111 (back in July last year), cuz I heard it was easier to get it set up/working, and haven't looked back since. Linux has it's headaches, but with this OS, at least I know that I own my computer and I have at least some control over the privacy of my data. With windows, the 2 options are, share some of your data, or share all of your data..

Also, Linux has much more control over the OS, and it's SO developer friendly compared to windows.. In windows, people can use it for a lifetime (like I did). and barely know that scripts are a thing, and that we can easily make them to automate any task.. If I'd been using Linux for all these years, I'd have easily been a full fledged dev by now.. But I missed my years of having a better memory, and now I'm having difficulties holding onto new information. I still can, but it's more difficult than before..

"You can also use block swapping technique (supported by major trainer toolkits like musubi-tuner and diffusion-pipe), which can drastically reduce memory requirements for training (at the cost of duration)."

That sounds brilliant, but does it allow for training with larger videos? Also, how many videos do you train with?

Typically time training doesn't really phase me, so long as it's not taking more than 2-3 days, that's my breaking point where I'm like, "OK, I'd like to actually use my PC now..". But, for me, it's more about quality of outcome, than speeding up the process while sacrificing quality. If it takes a couple/few days, but the end result is something I can be proud of, then the sacrifice (of time) was worth it. If it gets done in a few hours, or a day, and results are sub-optimal, then I will not feel good about it, and will be less likely to feel engaged to do further training unless some logical fix can be implemented to increase quality of the next run.

"even though I don't use SDXL-based models (after Flux arrived"

Due to increased hardware requirements, especially in training, there's still a small fraction of the loras for Flux as there is for, maybe even illustrious. So, that's why I still use it, and if you mainly produce anime, I'm surprized you've managed to avoid using it.. realism is flux's wheelhouse, Illustrious is the way to go for producing any range of animated characters/styles.

Personally, I'm a mix. I like to make new things. So, I blend. Real life anime characters(males that is, the female ones have all been done to death). Still working on refining multiple characters in one scene though.. And 100 other things. Actually part of the reason I'm avoiding real-time communications. Cuz there's so many things to do with AI, and I'm even now strongly considering getting a 3D printer. So, I'll have a whole other thing to learn then..

But, I always set aside time for communications like this because they feel less, obligatory, and I set the timeframe for this, whereas real time, I feel like I gotta respond to anyone that talks to me as a matter of common courtesy. I gotta eat something, lol.. Think that's why I'm gettin all rambley.

@Lazman

Was animatediff bad?

It was good, but the underlying technology is too old now to compete with modern video models.

...instant massive oversized tits

Haha, I get what you mean. I also don't like when women are treated like pieces of meat, but I've been involved in generative AI since 2022, so I've probably developed some kind of immunity to even the weirdest kinks people post on Civitai. My brain just filters that stuff out automatically :)

...let me know if this is somehow just a 'me' thing

Absolutely not - it's been everyone's experience on this site over the past few months. It frequently becomes extremely slow.

That sounds brilliant, but does it allow for training with larger videos? Also, how many videos do you train with?

Well, in theory, you can train with videos up to 720p, but you'll need a beefy GPU (like something with 80GB VRAM).

You don't actually need a ton of highres videos to get good results, sometimes 10-20 is enough. For example, in this post: https://www.reddit.com/r/StableDiffusion/comments/1jqhopr/professional_consistency_in_ai_video_training_wan/ someone trained a pretty solid LoRA using just 30 videos and 10 images.

...if you mainly produce anime, I'm surprized you've managed to avoid using it

It's not that I actively avoid it - I just like trying new things. I really loved Flux the first time I tried it, so I decided to switch. But that's just my personal bias, I'm not saying Illustrious-based models are bad.

@seruva19 "It was good, but the underlying technology is too old now to compete with modern video models."

I suppose what I meant was, could it do well with a good model and well trained lora/s? Cuz like you said, hardware is the major pain point, so getting it so relatively tiny SDXL/Illustrious models could do video, given that even I can train a decent lora, imho, it would be huge if the output could be any good.

Like, one of those, could be better, not because the base is better, but because it doesn't require millionaire hardware to train in respectable resolutions, type things. So, a lot more people would be working to make it better, and not just corporations drip feeding us updates that we can barely make use of because of the hardware requirements to even do inference.

"My brain just filters that stuff out automatically"

Lucky.. I'm autistic, so my brain is the opposite of that. If something bothers me, it will continue to bother me exponentially worse until it is solved, which is very irritating when it comes to things that literally can't/won't be solved..

"but you'll need a beefy GPU (like something with 80GB VRAM)."

reminds me of the kinda responses I get when I talk to ChatGPT about this kinda thing. It just kinda casually mentions high cost hardware like I'm Warren Buffet or something.. Tbh, I'm not even sure if the power in this old-ass apartment could support me running much more hardware than I am.. I just pray we don't have knob and tube wiring in this place, or it'll probably be an inevitability that I'll end up inadvertently burning it down eventually.. On that note, planning on buying a 3D printer tomorrow, a big enclosed one. >,<

"It's not that I actively avoid it - I just like trying new things"

Did you mean to say something else, or am I just reading this wrong, lol.. Cuz it looks like a total contradiction to me..

@Lazman

Did you mean to say something else, or am I just reading this wrong

Oh, I see, I was a bit vague there :)

What I meant is that Illustrious is an SDXL-based model and didn't really introduce substantially new ideas, while Flux felt like a breath of fresh air. It brought advanced prompt understanding, much better prompt coherence, and was something new overall.

...reminds me of the kinda responses I get when I talk to ChatGPT

Nah, I don’t use LLMs to reply to real people - that'd feel kinda insulting to them, honestly :)

But well, that's the official answer the Wan developers give when people ask about fine-tuning requirements: they say you'll need no less than 8xA100 GPUs to do a "real" training of their model.

@seruva19 Flux is alright, good quality, better prompt adherence, less borked hands. Though the loras for it are much more limited in range, mostly realistic, with a blend of quirky/weird, and a few cool ones. I mainly enjoy making anime characters look as realistic as possible, without losing their recognizable anime appearance, or hitting that uncanny valley look. Been tryin pretty hard to get a good Wilykat from Thundercats 2011, but the loras that exist for him, although decent, have some room for improvement, so I'm working on training a lora for him with screenshots I've pulled from the series.

I haven't managed to successfully train a flux lora yet, as in, onetrainer keeps having an error when I try(using nf4/gguf). So that's another reason I haven't really gotten into using it. That, and that it takes 1-6 minutes to generate an image (depending on CFG/steps).

"Nah, I don’t use LLMs to reply to real people - that'd feel kinda insulting to them, honestly :)

But well, that's the official answer the Wan developers give when people ask about fine-tuning requirements: they say you'll need no less than 8xA100 GPUs to do a "real" training of their model."

True, I feel the same. And yea, that sounds about right(what the WAN devs would say, since they likely all have top notch PCs).

TBH, I woulda laughed if you'd facetiously ended your response with "That's all, if there's anything else I can do to help, just let me know!"

Though, I wasn't suggesting that you were using ChatGPT(frankly, if you were. I'd expect much longer responses.. That thing seems to dump max tokens every time unless I tell it to 'be concise'). I was just speaking conversely, cuz ChatGPT always does that to me, I've told it multiple times, and I think even put it into the memory that I'm a broke-ass, but it still gives me richy suggestions, lol.

@Lazman Flux seriously impressed me when it came out: it had such great output sharpness, prompt adherence and good anatomy! And styles (including 2D animation) were easy to train. Flux is not perfect, though. It has a strong internal bias towards a lot of things, and I don't like that it can sometimes fall back to realistic or 3D styles even with a properly trained LoRA.

As for Flux training, I use AI Toolkit, could not really get along with other trainers.

@seruva19 "AI toolkit"

Is that like, some form of CLI tool? honestly, as another side project, I've been working on developing a node-based AI training program, but, I'm not a dev, so I've had to chip away at it with ChatGPT. And the free ChatGPT isn't very accurate with coding, so it's a lot of debugging. So, I've put it off for now until I can hopefully get my local LLM to code well enough to make the process more efficient.

I did manage to code a decent full GUI(not webui) for loading and inference of LLM's and loras, using PYQT. Still got some things I need to add to it though, like an optimizer, so I can load larger LLMs with more parameters and higher precision. But it will help if I can fix it's ability to code well first. So, I'm considering attempting to train a qwen-coder lora, if I can find a good dataset for PYQT/python code.

@Lazman AI Toolkit is https://github.com/ostris/ai-toolkit, yes, it initially was CLI-only, but now it has its own GUI too (but I never used it, so don't have any opinion about it).

Goro Miyazaki might finally be able to make his father proud with this

cool!

Nice work. I've been finding slightly lower learning rate (e.g. 2e-5 but more epochs e.g. 60) is helping with HV loras. I wonder if it would help to train on short (e.g. 1-3s) vids rather than just the images.

That might be true. The creator of a very good pixel-style LoRA mentioned that long training with a low learning rate improved style reproduction success (https://civitai.com/models/1144459/pixel-style-hunyuan-video-model?modelVersionId=1346556&dialog=commentThread&commentId=661741&highlight=678418).

Since I'm not satisfied with v0.3 (which has many artifacts and style inconsistencies), I'm currently retraining it using a fairly large (for LoRA) mixed dataset (images + very short high-resolution videos, up to 32 frames). However, I'm actually using a higher learning rate (2e-4) than before. I want to test some of my assumptions regarding loss/average changes throughout the entire training process (nothing special, just some intuitions) while hoping it won't overfit 🙂. And after this, I plan to experiment with lower learning rates on smaller video-only datasets.

upd 08/02. My assumptions were wrong :( Fine-tuning with very short highres videos led to a "fast forward" effect in clips generated with the v0.6 LoRA (or maybe it was because I overexperimented with the flow shift value during training). So, for v0.7 I will instead use longer, low-resolution videos mixed with some highres images. And a lower LR.

Good luck! I've been trying video only but suspect mp4 artifacts are hurting quality where there's a lot of movement. Might try adding images.
