What is DPO?

DPO is Direct Preference Optimization, the name given to the process whereby a diffusion model is finetuned based on human-chosen images. Meihua Dang et al. have trained Stable Diffusion 1.5 and Stable Diffusion XL using this method and the Pick-a-Pic v2 dataset, which can be found at https://huggingface.co/datasets/yuvalkirstain/pickapic_v2, and wrote a paper about it at https://huggingface.co/papers/2311.12908.
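As a rough illustration of the idea, the general DPO objective (as originally formulated for language models) can be sketched for a single "preferred vs. rejected" pair as below. The function and parameter names are hypothetical, the log-probabilities are plain numbers supplied by the caller, and `beta=0.1` is an arbitrary illustrative value. As far as the paper describes it, Diffusion-DPO adapts this by comparing denoising errors instead of exact log-likelihoods, so this is a sketch of the principle and not the authors' training code.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(
    policy_logp_preferred: float,
    policy_logp_rejected: float,
    ref_logp_preferred: float,
    ref_logp_rejected: float,
    beta: float = 0.1,  # illustrative value, not taken from the paper
) -> float:
    """DPO loss for one preference pair (hypothetical helper).

    The model being trained (the "policy") is compared against a frozen
    reference copy. The loss falls when the policy raises the likelihood
    of the preferred sample more than that of the rejected sample,
    relative to the reference.
    """
    preferred_gain = policy_logp_preferred - ref_logp_preferred
    rejected_gain = policy_logp_rejected - ref_logp_rejected
    return -log_sigmoid(beta * (preferred_gain - rejected_gain))
```

When the policy and the reference agree exactly, the loss is log(2); it drops below that as the policy shifts toward the preferred sample, with no separate reward model involved.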

What does it Do?

The trained DPO models have been observed to produce higher quality images than their untuned counterparts, with a significant emphasis on the adherence of the model to your prompt. These LoRA can bring that prompt adherence to other fine-tuned Stable Diffusion models.

Who Trained This?

These LoRA are based on the works of Meihua Dang (https://huggingface.co/mhdang) at https://huggingface.co/mhdang/dpo-sdxl-text2image-v1 and https://huggingface.co/mhdang/dpo-sd1.5-text2image-v1, licensed under OpenRail++.

How were these LoRA Made?

They were created using Kohya SS by extracting them from other OpenRail++ licensed checkpoints on CivitAI and HuggingFace.

1.5: https://civarchive.com/models/240850/sd15-direct-preference-optimization-dpo extracted from https://huggingface.co/fp16-guy/Stable-Diffusion-v1-5_fp16_cleaned/blob/main/sd_1.5.safetensors.

XL: https://civarchive.com/models/238319/sd-xl-dpo-finetune-direct-preference-optimization extracted from https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors

These are also hosted on HuggingFace at https://huggingface.co/benjamin-paine/sd-dpo-offsets/
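Conceptually, extracting a LoRA from a finetuned checkpoint amounts to taking the difference between the tuned and base weights of each layer and keeping a low-rank approximation of it. The following is a minimal NumPy sketch of that idea for a single weight matrix, assuming a truncated SVD. `extract_lora`, `apply_lora`, and the `strength` parameter are hypothetical names used for illustration; this is not the Kohya SS implementation.

```python
import numpy as np


def extract_lora(base_weight: np.ndarray, tuned_weight: np.ndarray, rank: int):
    """Return (up, down) such that up @ down approximates tuned - base."""
    delta = tuned_weight - base_weight
    u, s, vt = np.linalg.svd(delta, full_matrices=False)
    up = u[:, :rank] * s[:rank]  # shape (out_features, rank), columns scaled by singular values
    down = vt[:rank, :]          # shape (rank, in_features)
    return up, down


def apply_lora(base_weight: np.ndarray, up: np.ndarray, down: np.ndarray,
               strength: float = 1.0) -> np.ndarray:
    """Add the low-rank offset to a base weight, scaled by a strength factor."""
    return base_weight + strength * (up @ down)
```

With `strength=1.0` the result approximates the finetuned weight (exactly, if the true difference has rank at most `rank`); lower values blend back toward the base weight. In the same way, the offset can be added to the weights of a different finetuned model of the same architecture.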

Comments (28)

Sorry, I'm too lazy to look up external links. Can you please write one sentence that explains what this actually does?

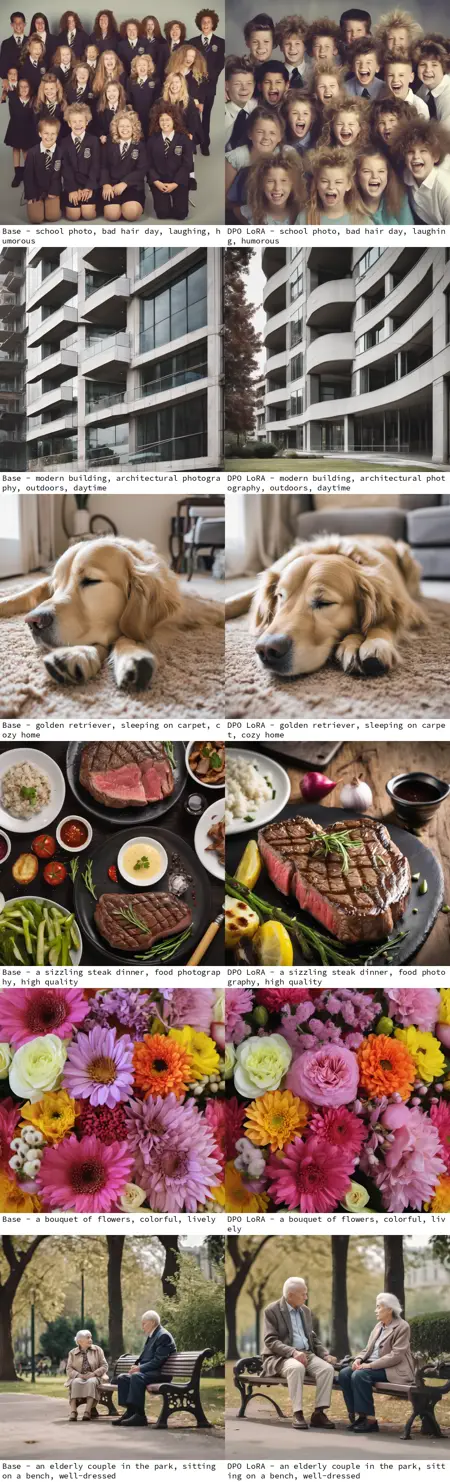

Edit: OK, I ended up reading the external link. Maybe a before and after comparison image would be helpful to demonstrate the impact it has and how it improves the image to match human preferences.

I get the feeling it's one of those things where "If you have to ask, you're not nerdy enough to use it."

Hello, thank you for the suggestions, and my apologies for the confusion. I've edited in a blurb with some details about the model and its intended effects. The authors provided this comparison, which isn't great but is a start: https://huggingface.co/mhdang/dpo-sdxl-text2image-v1/blob/main/01.gif. I'm working on better comparisons as we speak, and I'll post some as soon as they're done.

Thanks, I appreciate it! Merry Christmas!

So, I guess this is some kind of detail enhancer.

no, it helps with prompt accuracy, mainly.

basically, looking at this page, one thing that comes to mind is that this generates only photos of cats and dogs..

dam* lack of samples and clear explanations.

Hello, I have added more examples of some varying kinds of prompts for both LoRA. The effects of the training are difficult to summarize and I myself haven't explored the edges of the model or the LoRA yet (I did not train it, I just extracted the LoRA.)

Rather than a specific style or intention, the model was trained on 850,000 "A vs. B" image pairs that were chosen by humans. So all we can really say for sure that the training did was make the images "more aligned with human preferences." What that means in practice will only really bear out with time.

What settings do you recommend for using this Lora?

It's really good so far actually!

"finetuned based on human-chosen images" Does this imply that other finetunes were trained with images chosen by... animals? ;> At random?

LAION's common crawl could be considered non-human chosen. But many finetuners claim they chose only the best images, which I highly doubted, particularly those with hundreds of thousands. One early model I used to joke might've been trained on images of mail order brides. ;> Though those could still be considered human-chosen.

Most datasets are curated by algorithms, not people (or animals or randomness.) You can argue that the algorithms were written by people but that discussion seems unproductive, the point of PickAPic is that it's a massive dataset that is 100% human curated, and that is a rarity.

I think both of you are missing the point. DPO can be automated, it doesn't have to rely on human input. Likewise, a dataset isn't necessarily cherry-picked by hand. DPO optimizes the PREFERENCE, because it trains the model with differential data, much like RLHF. The difference is, RLHF uses a reward model trained to prefer the same things the authors prefer, and DPO doesn't use this, it uses the trained model itself. I don't know how Diffusion-DPO works in image generation models, but in LLMs it relies on token probabilities - the higher the probability of the desired output, the higher the reward. In layman's terms, ofc. Hence DIRECT preference optimization. But the source of preference is arbitrary. Preference is a bias, and bias is arbitrary more often than not. We can use DPO to appeal to humans and use human data. Or we can use DPO to make a model work similarly to another model, like Midjourney, another diffusion algorithm. The source of preference is irrelevant in this case.

@Erilaz Interesting. Thanks for your reply.

Great work! Really excellent results

for all of the people who are confused about what this is, im no expert or anything, but the lora is mainly used to be baked into other models, and its effect is that it makes stable diffusion take your prompt more seriously. the lora is mainly meant for model trainers, and will help with using more natural language in your prompts instead of 1 million keywords.

hope this comment was useful. again, im not an expert, but people seem to be very confused about this, and i dont want that to take away from how cool this really is!

does it work with Pony V6 ?

Unfortunately I don't see a significant difference. I tested a simple sentence, and the model does a good job both with DPO and without it - https://civitai.com/images/8497276

Thanks very much, it makes my sdxl model listen better to prompts

may i know what is the best setting for the lora strength?

Are we supposed to use this as a LoRA in our image generations to make the images better? Or is this just for creators to put in their models?

I have tested with and without. For me, I find it follows my prompts better. As well as removing those unsightly bumps on bodies, creating a smoother look.. But, then again, it could just be me.

@Trixies Sweet! tnx

Brilliant.

Files

sd_xl_dpo_lora_v1.safetensors

Mirrors

sd_xl_dpo_lora_v1.safetensors

dpo_sdxl.safetensors

sd_xl_dpo_lora.safetensors